URL Extractor Tool

Find and extract all URLs from your text with one click

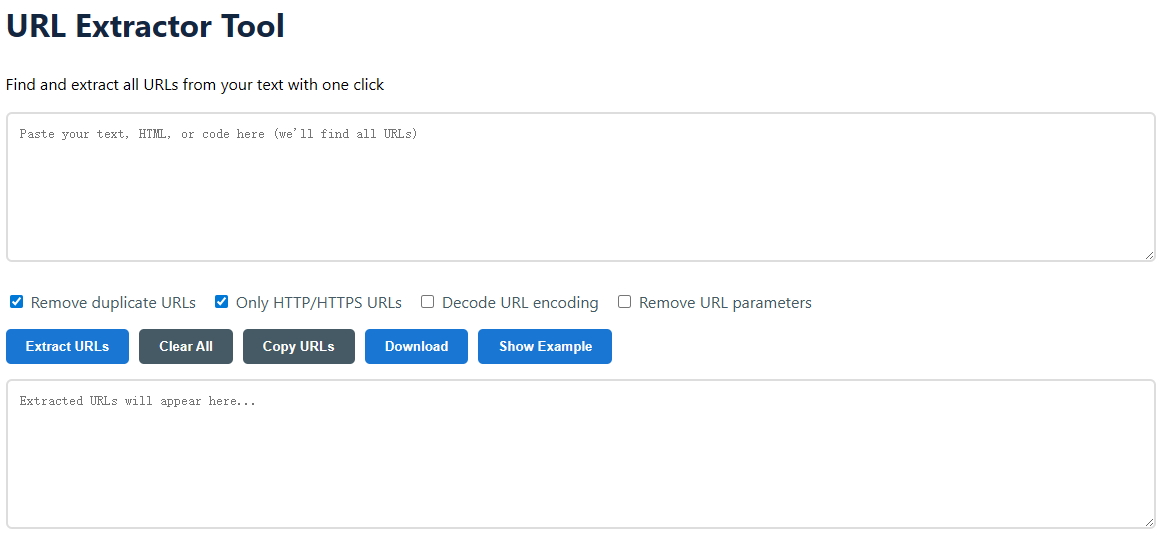

How to Use the Free URL Extractor Tool

Our URL Extractor is a powerful, browser-based utility designed to instantly parse and isolate all web addresses from any text block. Whether you're a developer sifting through HTML, a marketer auditing backlinks, or a researcher compiling sources, this tool simplifies a tedious manual process into a single click. It's completely free, requires no registration, and processes your data securely in your browser without sending it to any server. Follow the simple steps below to transform messy text into a clean, actionable list of URLs.

- Paste Your Source Text

- Configure Your Extraction Options

  - Remove duplicate URLs: Filters out identical links for a clean list.

  - Only HTTP/HTTPS URLs: Focuses on standard web links, excluding mailto: or ftp:.

  - Decode URL encoding: Converts characters like %20 back into spaces.

  - Remove URL parameters: Strips query strings (?id=123) to get the base URL.

- Click "Extract URLs"

- Copy, Download, or Analyze

Common Use Cases for Extracting URLs

Manually hunting for links is inefficient and error-prone. This tool automates the task for a wide range of professional and personal applications. From SEO audits to academic research, extracting URLs is a fundamental step in data organization and analysis. Here are the most frequent scenarios where our URL Extractor delivers significant value.

- SEO & Digital Marketing: Audit backlink profiles by extracting URLs from competitor site exports or third-party tool reports.

- Web Development & Debugging: Quickly find all external resources (scripts, stylesheets, images) within HTML, CSS, or JavaScript code.

- Content Management & Migration: Identify all internal and external links in a batch of articles before moving a website to a new platform.

- Academic Research & Citation: Compile a bibliography of source URLs from research notes or draft papers.

- Data Scraping & Analysis: Clean and prepare raw scraped data by isolating the core URLs from surrounding text and HTML tags.

- Social Media & Community Management: Extract shared links from community forums, comment sections, or social media export files.

- Security Analysis: Review logs or reports to isolate potentially malicious domains or phishing links mentioned in text.

- Personal Organization: Gather all website links from a lengthy document, email thread, or note into one manageable list.

What URL Formats Can Be Extracted?

The tool uses a sophisticated regular expression (regex) pattern to identify a broad spectrum of Uniform Resource Locators. It's designed to recognize not just perfect, fully-qualified web addresses but also common variations and encodings found in real-world text. Understanding what it can detect helps you interpret the results accurately.

Standard Web URLs

- https://www.example.com/page

- http://blog.example.co.uk/article?id=5

- https://example.com:8080/path/

Common Variations

- www.example.com (no scheme)

- //cdn.example.net/asset.js (protocol-relative, also called scheme-relative)

- subdomain.example.org/path/file.pdf

Encoded & Complex

- https://exa%6Dple.com/ (obfuscated) -> https://example.com/

- http://example.com/file%20name.txt (URL-encoded spaces)

- example.com/page#section (with fragment identifier)
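The tool's actual pattern is not published on this page, and the tool itself runs as JavaScript in the browser. As a rough illustration of regex-based extraction, the Python sketch below uses a hypothetical, simplified pattern (`URL_PATTERN`) and helper (`extract_urls`). It only recognizes candidates that start with an explicit scheme, a protocol-relative `//`, or `www.`, so it is narrower than the formats listed above.

```python
import re

# Hypothetical, simplified pattern; the tool's real regex is not shown in this document.
URL_PATTERN = re.compile(
    r"""
    (?:
        [a-z][a-z0-9+.-]*://   # explicit scheme such as https://
      | //                     # protocol-relative prefix
      | www\.                  # bare www. host
    )
    [^\s<>"']+                 # run of characters up to whitespace, quotes, or angle brackets
    """,
    re.IGNORECASE | re.VERBOSE,
)

# Characters that usually belong to the sentence, not the URL.
TRAILING_PUNCTUATION = ".,;:!?)"


def extract_urls(text: str) -> list[str]:
    """Return candidate URLs in order of appearance, minus trailing punctuation."""
    return [
        match.group(0).rstrip(TRAILING_PUNCTUATION)
        for match in URL_PATTERN.finditer(text)
    ]


text = "See https://www.example.com/page, then //cdn.example.net/asset.js and www.example.com."
print(extract_urls(text))
```

Stopping at quotes and angle brackets is what lets a pattern like this pull a link out of an `href="..."` attribute without swallowing the rest of the tag.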

Advanced Features & Technical Capabilities

Beyond simple extraction, this tool includes several intelligent processing options to refine your results. These features handle common data cleaning tasks automatically, saving you time in post-processing. Each option is designed to solve a specific problem encountered when working with URLs extracted from unstructured data.

Duplicate Removal

Identifies and eliminates repeated URLs, ensuring your final list is unique. This is crucial for analytics, link building, and sitemap generation where counting the same link multiple times skews the data.
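A minimal sketch of order-preserving deduplication, assuming URLs are compared as exact strings (the helper name `remove_duplicates` is hypothetical and this is not the tool's own code):

```python
def remove_duplicates(urls):
    """Drop repeated URLs, keeping the first occurrence of each in its original position."""
    # dict keys are unique and remember insertion order.
    return list(dict.fromkeys(urls))


links = [
    "https://example.com/a",
    "https://example.com/b",
    "https://example.com/a",
]
print(remove_duplicates(links))
```

Under this assumption, two spellings of the same address (for example, different letter case in the host) count as different URLs unless they are normalized first.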

Protocol Filtering

When "Only HTTP/HTTPS URLs" is enabled, the tool filters out non-web links such as mailto: addresses, ftp://server.com, and tel:+1234567890, giving you a clean list of web pages.
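One way to express this filter, sketched in Python with the standard `urllib.parse` module. The helper name `only_web_urls` and the `WEB_SCHEMES` set are assumptions made for illustration:

```python
from urllib.parse import urlsplit

# Schemes treated as "web" links in this sketch.
WEB_SCHEMES = {"http", "https"}


def only_web_urls(urls):
    """Keep only URLs whose scheme is http or https (case-insensitive)."""
    return [u for u in urls if urlsplit(u).scheme.lower() in WEB_SCHEMES]


candidates = [
    "https://example.com/",
    "ftp://server.com",
    "tel:+1234567890",
    "HTTP://example.org/",
]
print(only_web_urls(candidates))
```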

URL Decoding

Converts percent-encoded characters back to their standard form. For example, %20 becomes a space, and %2F becomes a forward slash, making URLs human-readable and consistent.
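The same conversion can be sketched with Python's standard `urllib.parse.unquote`. The wrapper name `decode_url` is hypothetical, and the tool's own browser-side implementation may differ:

```python
from urllib.parse import unquote


def decode_url(url):
    """Replace percent-escapes such as %20 with the characters they stand for."""
    return unquote(url)


print(decode_url("http://example.com/file%20name.txt"))
print(decode_url("http://example.com/a%2Fb"))
```

Note that decoding `%2F` turns an escaped slash into a real path separator, so a decoded URL is easier to read but is not always equivalent to the original.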

Parameter Stripping

Removes query strings (everything after the ?) and fragment identifiers (after the #). This helps in canonicalization, grouping page variants, and analyzing the core page structure.
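A short sketch of the idea, assuming the URL can be split with Python's `urllib.parse` (the helper name `strip_parameters` is hypothetical):

```python
from urllib.parse import urlsplit, urlunsplit


def strip_parameters(url):
    """Return the URL without its query string and fragment."""
    parts = urlsplit(url)
    # Rebuild from scheme, host, and path only; query and fragment are left empty.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))


print(strip_parameters("https://example.com/shop?id=123&ref=abc#top"))
```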

Example: Input vs. Extracted Output

Seeing the tool in action clarifies its power. Below is a sample of mixed content containing plain text, HTML, and a malformed URL. The right column shows the clean, processed output after applying all available options (duplicates removed, only HTTP/HTTPS, decoded, parameters stripped).

| Input Text (HTML & Mixed Content) | Extracted & Cleaned URL List |
|---|---|
| Check out our blog at https://blog.example.com and our main site: http://www.example.com. Here's a link: <a href="https://example.com/shop?id=123&ref=abc">Shop Now</a> Don't forget http://EXAMPLE.COM/About-Us. Contact us via mailto:[email protected]. A broken mention: htt://bad-url.com. Encoded link: https://ex%61mple.com/file%20name.doc | https://blog.example.com<br>http://www.example.com<br>https://example.com/shop<br>http://example.com/About-Us<br>https://example.com/file name.doc |
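To make the combined effect of the options concrete, here is a Python sketch that reproduces the cleaned list from a slightly abridged copy of the sample input. It is an approximation under stated assumptions, not the tool's code: the pattern `HTTP_URL` and the helpers `clean` and `extract_and_clean` are hypothetical, the pattern only accepts `http://` and `https://`, and the host is lowercased, which is inferred from the sample output.

```python
import re
from urllib.parse import unquote, urlsplit, urlunsplit

# Hypothetical pattern: http(s) only, stopping at whitespace, quotes, or angle brackets.
HTTP_URL = re.compile(r"https?://[^\s<>\"']+", re.IGNORECASE)


def clean(url):
    """Strip query and fragment, decode percent-escapes, and lowercase the host."""
    parts = urlsplit(url)
    host = unquote(parts.netloc).lower()
    path = unquote(parts.path)
    return urlunsplit((parts.scheme, host, path, "", ""))


def extract_and_clean(text):
    """Extract http(s) URLs, clean each one, and drop duplicates in order."""
    found = (m.group(0).rstrip(".,;:!?)") for m in HTTP_URL.finditer(text))
    cleaned = (clean(u) for u in found)
    return list(dict.fromkeys(cleaned))


sample = (
    "Check out our blog at https://blog.example.com and our main site: "
    "http://www.example.com. Here's a link: "
    '<a href="https://example.com/shop?id=123&ref=abc">Shop Now</a> '
    "Don't forget http://EXAMPLE.COM/About-Us. "
    "A broken mention: htt://bad-url.com. "
    "Encoded link: https://ex%61mple.com/file%20name.doc"
)

for url in extract_and_clean(sample):
    print(url)
```

In this sketch the query string is removed before decoding, so an escaped `?` inside a path is not mistaken for the start of a query.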

Why Choose Our Online URL Extractor?

Many online tools perform basic functions, but ours is built with precision, user experience, and data integrity in mind. We prioritize a seamless workflow that respects your time and privacy. Here are the key benefits that set our tool apart from manual methods or other utilities.

- 100% Client-Side Processing: Your data never leaves your computer. All extraction happens instantly in your browser, ensuring complete privacy and security.

- No Limits or Registration: Use it as much as you need, for free. There are no character limits, daily quotas, or sign-up requirements.

- Batch Processing Power: Handle large documents, code files, or data exports with ease, extracting thousands of URLs in milliseconds.

- Intelligent Cleaning Options: The built-in filters (deduplication, decoding, etc.) perform complex data cleaning that would take minutes manually.

- Direct Export Options: One-click copying to clipboard or downloading as a .txt file integrates seamlessly into your existing workflow.

Frequently Asked Questions (FAQ)

Have questions about how the tool works or its capabilities? Below are answers to the most common queries we receive. If your question isn't covered here, the tool's interface and options are designed to be intuitive and self-explanatory through direct interaction.

Is my data safe when using this tool?

Absolutely. The entire extraction process runs locally in your web browser using JavaScript. No text you paste is uploaded to any server, logged, or stored. You can verify this by disconnecting your internet after loading the page; the tool will still function perfectly.

Can it extract URLs from PDF or Word documents?

Yes, with one extra step. First, extract the text from the PDF or Word file. You can typically do this by selecting all text (Ctrl+A/Cmd+A) and copying it (Ctrl+C/Cmd+C) from within the document viewer or editor. Then paste the copied text into our tool to extract the URLs.

What does "Decode URL encoding" do?

URLs often replace special characters (like spaces, ampersands, or non-ASCII letters) with a percent sign (%) followed by hexadecimal codes. This option converts those codes back to the original readable characters. For instance, %20 becomes a space, and %C3%A9 becomes "é".
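The "é" case works because percent-encoding operates on bytes: the character is first encoded as UTF-8 (two bytes, C3 and A9), and each byte gets its own percent-escape. A small Python illustration using the standard library:

```python
from urllib.parse import quote, unquote

e_acute = "\u00e9"  # the character "é"

utf8_bytes = e_acute.encode("utf-8")  # two bytes: 0xC3 0xA9
encoded = quote(e_acute)              # one percent-escape per byte
decoded = unquote("%C3%A9")           # back to the single character

print(utf8_bytes.hex(), encoded, decoded)
```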

Why are some URLs not extracted?

The tool is designed for accuracy over guesswork. It may miss URLs that are severely malformed (e.g., missing dots like "http://example"), obfuscated with intentional typos, or written in plain text without a protocol (like "example.com") if they are part of a sentence without clear boundaries. Enabling all filters provides the cleanest results for standard web links.
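The kind of strictness described here can be sketched as a simple acceptance check. The rule below (an http or https scheme plus a host containing at least one dot) and the helper name `looks_like_web_url` are assumptions for illustration; the tool's real criteria are not documented on this page.

```python
from urllib.parse import urlsplit


def looks_like_web_url(candidate):
    """Accept only http(s) URLs whose host contains at least one dot."""
    parts = urlsplit(candidate)
    host = parts.hostname or ""
    return parts.scheme in {"http", "https"} and "." in host


for candidate in ["https://example.com/page", "http://example", "htt://bad-url.com", "example.com"]:
    print(candidate, looks_like_web_url(candidate))
```

A check like this rejects a mistyped scheme and a bare domain alike, which is the trade-off between accuracy and recall that the answer above describes.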